Introduction

Definition first: an autonomous mobile robot is a vehicle; its mind is the control stack that plans, senses, and negotiates work. Robotics software sits in that mind, coordinating paths, queues, and handoffs with people and machines. Picture a shift change in a mixed factory. Pallets stack up, aisles narrow, and time ticks away. Managers see the same story each day.

Many sites report double-digit idle minutes per hour, much of it caused by stalled handoffs or maps that fall out of date. Site data also suggests that most faults are not hardware faults; they are workflow and integration faults. If so, why do some AMR fleets flow while others stutter? Is it the map, the rules, the links to the warehouse management system (WMS) or the PLCs, or all of them at once? Often, it is all of them. Let us compare the old and new ways with care. We start with friction, then move to what comes next.

The Hidden Friction That Slows AMR Rollouts

Why do legacy stacks stall?

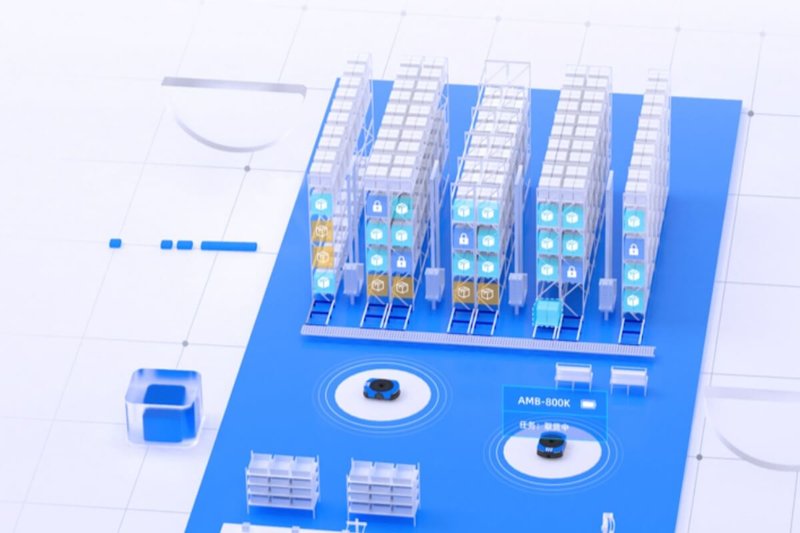

Here is the direct point: most delays are not in the motors. They live in software. Modern fleets depend on AMR software to align maps, jobs, and traffic rules. But many teams still bolt robots onto a warehouse as if it were static. SLAM maps drift when aisles shift. LiDAR sees a new rack, but the map lags by a week. Edge computing nodes run outdated path rules. The MQTT broker gets flooded, so messages arrive late or, at low QoS levels, not at all. The result is queue jitter and odd detours. Look, it’s simpler than you think: a stable map, clean topics, and predictable priorities fix a large share of the “mystery stops.” Yet legacy stacks hide these knobs, or make them brittle.

Integration hurts too. A WMS says “move carton,” while a PLC gate says “closed,” and the fleet orchestrator cannot reconcile the two in time. Old APIs force poll-and-wait: no event bus, no policy layer. Charging plans ignore power converter limits and duty cycles, so a third of the fleet lines up at the same dock (funny how that works, right?). Human-in-the-loop control is also weak. Operators need to nudge missions or set soft zones fast, and many tools bury that under menus. Standards like VDA 5050 help, but adapters vary in quality. All these pain points are subtle. They do not show in a demo. They show under load, on Monday morning.
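To make the poll-and-wait problem concrete, here is a minimal sketch of the event-driven alternative: the dispatcher reacts to gate events and move requests as they arrive instead of polling either system. The class name, method names, and IDs are hypothetical, and treating an unknown gate as closed is an assumption made for the example, not a rule taken from any particular WMS or PLC interface.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class GateAwareDispatcher:
    """Holds WMS move requests until the PLC gate on their route reports open."""

    gate_open: Dict[str, bool] = field(default_factory=dict)
    waiting: Dict[str, List[str]] = field(default_factory=dict)
    dispatched: List[str] = field(default_factory=list)

    def on_gate_event(self, gate_id: str, is_open: bool) -> None:
        """Handle a gate state change pushed by the PLC side."""
        self.gate_open[gate_id] = is_open
        if is_open:
            # Release every job parked behind this gate, in arrival order.
            self.dispatched.extend(self.waiting.pop(gate_id, []))

    def on_move_request(self, job_id: str, gate_id: str) -> None:
        """Handle a move request pushed by the WMS side."""
        # Assumption: a gate with no reported state is treated as closed.
        if self.gate_open.get(gate_id, False):
            self.dispatched.append(job_id)
        else:
            self.waiting.setdefault(gate_id, []).append(job_id)


dispatcher = GateAwareDispatcher()
dispatcher.on_gate_event("gate-A", False)
dispatcher.on_move_request("carton-17", "gate-A")  # parked, gate is closed
dispatcher.on_gate_event("gate-A", True)           # gate opens, job is released
print(dispatcher.dispatched)
```

The point of the design is that neither side waits on a timer: the job moves the moment the gate event arrives, and a closed gate never produces a robot idling in front of it.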

Comparing Old Rules to New Principles

What’s Next

Let us shift to a forward view, and be technical about it. Newer AMR software flips the model from rigid routes to policy-driven flow. Think event-driven orchestration: jobs publish intents; robots subscribe based on capability, state of charge, and local congestion. A graph planner updates live, not nightly. SLAM maps merge with semantic layers, so a “no-go at 3 pm” is a rule, not a manual edit. Edge computing nodes run the traffic core, while the cloud handles fleet-wide learning and simulation. This cuts latency where it matters and keeps the big brain for what is global. Add a digital twin to rehearse layout changes before any tape goes down on the floor. And use typed connectors to the WMS and MES so exceptions flow as events, not emails.
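The intent-matching idea can be sketched in a few lines. This is an illustration under stated assumptions, not any vendor's scheduler: the `Intent` and `Robot` types, the `pick_robot` function, the 0.8 congestion ceiling, and the tie-break order (same zone first, then highest charge) are all hypothetical choices.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class Intent:
    job_id: str
    needs: FrozenSet[str]  # capabilities the job requires
    zone: str              # zone where the job starts
    min_charge: float      # minimum state of charge, 0.0 to 1.0


@dataclass(frozen=True)
class Robot:
    name: str
    capabilities: FrozenSet[str]
    charge: float          # state of charge, 0.0 to 1.0
    zone: str              # zone the robot is currently in


def pick_robot(
    intent: Intent,
    robots: List[Robot],
    congestion: Dict[str, float],
    max_congestion: float = 0.8,  # assumed ceiling, tune per site
) -> Optional[Robot]:
    """Return the best eligible robot for an intent, or None if nobody qualifies."""
    eligible = [
        r for r in robots
        if intent.needs <= r.capabilities                   # has every capability
        and r.charge >= intent.min_charge                   # enough battery
        and congestion.get(r.zone, 0.0) <= max_congestion   # not stuck in traffic
    ]
    if not eligible:
        return None
    # Prefer a robot already in the job's zone, then the highest charge.
    return max(eligible, key=lambda r: (r.zone == intent.zone, r.charge))


fleet = [
    Robot("amr-1", frozenset({"lift"}), 0.9, "dock"),
    Robot("amr-2", frozenset({"lift", "tow"}), 0.5, "aisle-3"),
    Robot("amr-3", frozenset({"lift", "tow"}), 0.2, "aisle-3"),
]
job = Intent("job-1", frozenset({"tow"}), "aisle-3", min_charge=0.3)
chosen = pick_robot(job, fleet, congestion={"aisle-3": 0.4})
print(chosen.name if chosen else "no robot available")
```

Because eligibility is a filter over live state rather than a fixed route table, the same intent can land on a different robot a minute later when charge or congestion changes.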

The comparative result? Fewer deadlocks, smoother handoffs, and predictable charge scheduling. LiDAR and vision fuse to classify obstacles, so a person and a pallet get different yield behavior. Mission swaps happen mid-route with bounded delay. Operators see primitives like “escort,” “follow,” or “stage,” not raw coordinates. And when the radio gets noisy, a local fallback keeps safety and progress. Costs shift too. You buy less custom glue code and more reusable policies. In short, the stack learns the plant, not the other way around, and your team stops firefighting. Now, how should you choose among the options?
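A class-based yield rule can be as small as a lookup table. The sketch below is purely illustrative: the obstacle classes, action names, and clearance distances are made-up values, not safety guidance, and the fallback to the most conservative behavior for anything unclassified is an assumption of this example.

```python
from enum import Enum
from typing import Dict, Tuple


class Obstacle(Enum):
    PERSON = "person"
    PALLET = "pallet"
    UNKNOWN = "unknown"


# Hypothetical policy table: obstacle class -> (action, clearance in meters).
YIELD_POLICY: Dict[Obstacle, Tuple[str, float]] = {
    Obstacle.PERSON: ("stop_and_wait", 1.5),
    Obstacle.PALLET: ("replan_around", 0.5),
}


def yield_for(obstacle: Obstacle) -> Tuple[str, float]:
    """Look up the yield behavior for a classified obstacle."""
    # Anything without its own entry gets the most conservative behavior.
    return YIELD_POLICY.get(obstacle, YIELD_POLICY[Obstacle.PERSON])


for kind in Obstacle:
    action, clearance = yield_for(kind)
    print(f"{kind.value}: {action}, keep {clearance} m")
```

Keeping the rule in data rather than in planner code is what lets an operator change a clearance without a redeploy.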

Advisory: Three Metrics That Keep You Honest

First, measure time to first stable mission across shifts (not a demo hour, but a full day with workers, gates, and carts). Second, track mission success rate under load with live integrations, including WMS/MES and PLC interlocks, plus mean time to recover from a block. Third, evaluate observability depth: can you inspect topics, priorities, maps, and policies in one pane, and change them without a redeploy? If a platform clears these bars, you gain control of the flow, not just the hardware. And if you test, test with your real edge cases: rush orders, narrow aisles, and partial outages. That is where truth hides.
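The second metric is easy to compute once missions are logged consistently. Here is a minimal sketch, assuming a hypothetical `MissionRecord` with one entry per mission and a list of block durations in seconds; the field names and sample numbers are invented for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List, Optional


@dataclass
class MissionRecord:
    mission_id: str
    succeeded: bool
    # Duration in seconds of each block the robot had to recover from.
    blocked_seconds: List[float] = field(default_factory=list)


def success_rate(records: List[MissionRecord]) -> float:
    """Fraction of missions that completed; 0.0 for an empty log."""
    if not records:
        return 0.0
    return sum(r.succeeded for r in records) / len(records)


def mean_time_to_recover(records: List[MissionRecord]) -> Optional[float]:
    """Average block duration across all missions, or None if nothing blocked."""
    blocks = [b for r in records for b in r.blocked_seconds]
    return mean(blocks) if blocks else None


shift_log = [
    MissionRecord("m1", True, [30.0]),
    MissionRecord("m2", True),
    MissionRecord("m3", False, [90.0, 60.0]),
    MissionRecord("m4", True),
]
print(success_rate(shift_log))          # 0.75
print(mean_time_to_recover(shift_log))  # 60.0
```

Run it per shift rather than per week: an average over several days can hide exactly the Monday-morning dip you are trying to find.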

Summing up, old stacks were route-first and system-second; new stacks are policy-first and plant-aware. The difference shows on Monday mornings, not on launch day. Choose with your data, your floor, and your people in mind, and leave room to adapt as lines change. If you need a place to start a shortlist, study teams that publish real telemetry and failure modes. They tend to build tools worth keeping, and they tend to answer hard questions fast.

SEER Robotics